AI in multifamily is no longer experimental. From leasing automation to maintenance coordination and resident communication, AI tools are quickly becoming embedded in property management operations. But as adoption accelerates, many operators are asking the same question:

How do you properly evaluate multifamily AI vendors?

Traditional software evaluation methods don’t work for AI. And relying on polished demos can lead to expensive mistakes. So we created a practical framework for evaluating AI in multifamily that's built around what actually predicts performance in production, not just "AI that chats."

Why Multifamily AI Requires a Different Evaluation Approach

Most property management software is evaluated on features, integrations, user interface, and pricing. AI is different.

AI systems must handle:

- Ambiguous questions

- Policy exceptions

- Emotional conversations

- Multi-step workflows

- Cross-channel interactions

If you’re evaluating AI leasing automation or resident AI tools, these factors matter more than surface-level polish.

The Three Stakeholders AI Must Serve

Strong multifamily AI supports three groups:

1. Residents

Consistent, accurate responses across voice, text, and email.

2. Onsite and Centralized Teams

Reduced workload and fewer unnecessary escalations.

3. The Business

Improved occupancy, operational efficiency, and compliance.

A proper AI vendor comparison should account for all three, not just cost savings.

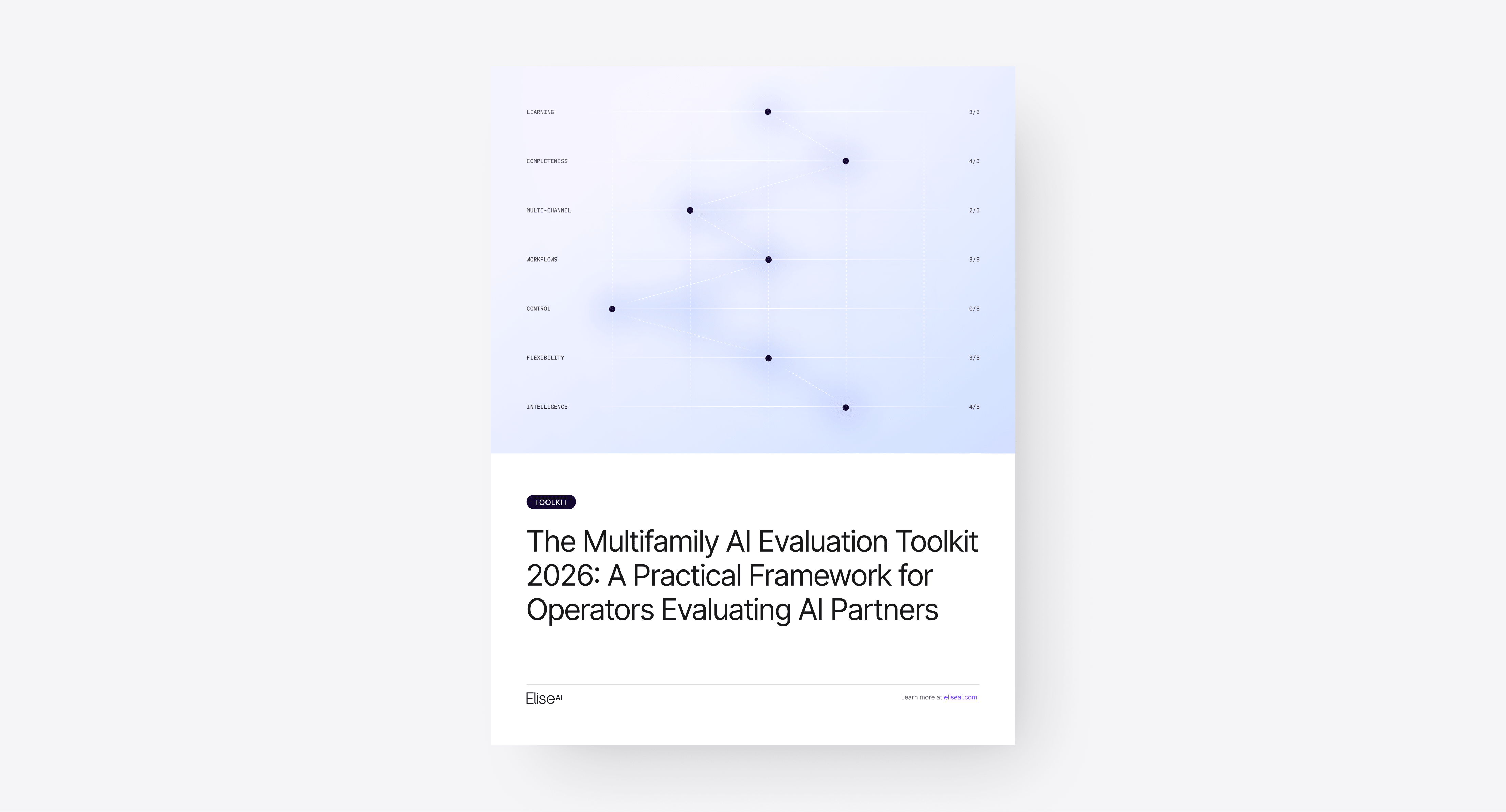

7 Principles for Evaluating AI in Multifamily

When comparing property management AI platforms, these seven principles consistently predict long-term performance:

1. Learning

Does the AI identify knowledge gaps and improve over time, or does it require constant manual updates?

2. Completeness

Does it cover the full renter lifecycle (leasing, maintenance, renewals, collections) with shared context?

3. Multi-Channel Capability

Does it perform reliably across voice, text, and email, retaining context between channels?

4. Workflow Execution

Does it complete multi-step tasks like tour scheduling, work order creation, and follow-up, or does it stop at message generation?

5. Operational Control

Can operators configure behavior by property, asset type, or owner requirements?

6. Proof of Performance

Is the AI deployed at scale with referenceable operators and measurable results?

7. Vendor Velocity

Is the vendor iterating quickly and incorporating customer feedback?

These principles move multifamily AI evaluation beyond marketing claims and toward operational substance.

How to Run a Structured AI Vendor Evaluation

To evaluate AI vendors effectively:

- Include cross-functional stakeholders (Ops, Leasing, Maintenance, IT)

- Test real historical scenarios, not vendor-curated examples

- Intentionally test failure cases

- Score vendors independently before group discussion

- Define what earns a 1 vs. a 5 before demos begin

Structure reduces bias. It also ensures vendors are compared apples-to-apples.
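For teams that want to make the scoring step concrete, here is a minimal sketch in Python of how independent scorecards could be combined. It assumes each evaluator rates every one of the seven principles from 1 to 5 before the group meets. The principle keys, the weights, and the function names (`vendor_score`, `disagreements`) are illustrative assumptions, not part of any vendor's tooling or a prescribed method.

```python
from statistics import mean

# The seven principles from this framework, as hypothetical dictionary keys.
PRINCIPLES = [
    "learning",
    "completeness",
    "multi_channel",
    "workflow_execution",
    "operational_control",
    "proof_of_performance",
    "vendor_velocity",
]

# Hypothetical weights: every principle counts equally except workflow
# execution, which this example team decided to count double.
WEIGHTS = {p: 1.0 for p in PRINCIPLES}
WEIGHTS["workflow_execution"] = 2.0


def vendor_score(scorecards, weights=WEIGHTS):
    """Combine independent scorecards (one dict per evaluator) for one vendor.

    Returns (overall weighted average, per-principle average).
    """
    for card in scorecards:
        for p in PRINCIPLES:
            if not 1 <= card[p] <= 5:
                raise ValueError(f"{p} must be scored 1-5, got {card[p]}")
    per_principle = {p: mean(card[p] for card in scorecards) for p in PRINCIPLES}
    total_weight = sum(weights[p] for p in PRINCIPLES)
    overall = sum(per_principle[p] * weights[p] for p in PRINCIPLES) / total_weight
    return overall, per_principle


def disagreements(scorecards, threshold=2):
    """List principles where evaluators differ by `threshold` points or more."""
    return [
        p
        for p in PRINCIPLES
        if max(c[p] for c in scorecards) - min(c[p] for c in scorecards) >= threshold
    ]


# Example: two evaluators score the same vendor independently.
ops = {p: 3 for p in PRINCIPLES}
leasing = dict(ops, workflow_execution=5)
overall, by_principle = vendor_score([ops, leasing])
to_discuss = disagreements([ops, leasing])
```

The disagreement list is the useful part for the group discussion: a wide gap between evaluators on one principle usually means the 1-vs-5 definitions for it were not specific enough, or that the two evaluators tested different scenarios.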

Don’t Skip Internal Readiness

Evaluating AI for property management isn’t just about the vendor.

Operators should also assess:

- Operating model (onsite, centralized, hybrid)

- Policy documentation quality

- Query complexity distribution

- Channel mix (voice vs. text vs. email)

Many AI implementations struggle not because the technology fails, but because readiness wasn’t assessed upfront.

Raising the Standard for Multifamily AI Evaluation

As AI adoption grows, the industry benefits from clearer standards around how we evaluate AI vendors. Choosing the right multifamily AI platform requires more than a compelling demo. It requires structured testing, clear scoring criteria, and a focus on operational outcomes.

Because ultimately, AI shouldn’t just sound intelligent. It should reliably improve day-to-day operations across your portfolio.