Like most engineering teams, we have spent the last year rebuilding our interview from scratch. The whiteboard problem is gone, good riddance. What we replaced it with was meant to look like the actual job: a realistic problem, messy data, an API key, roughly the setup a candidate would have on day one. Two hours, alone, demo at the end. It was the most honest screen we'd ever run. Then Claude Code one-shotted it.

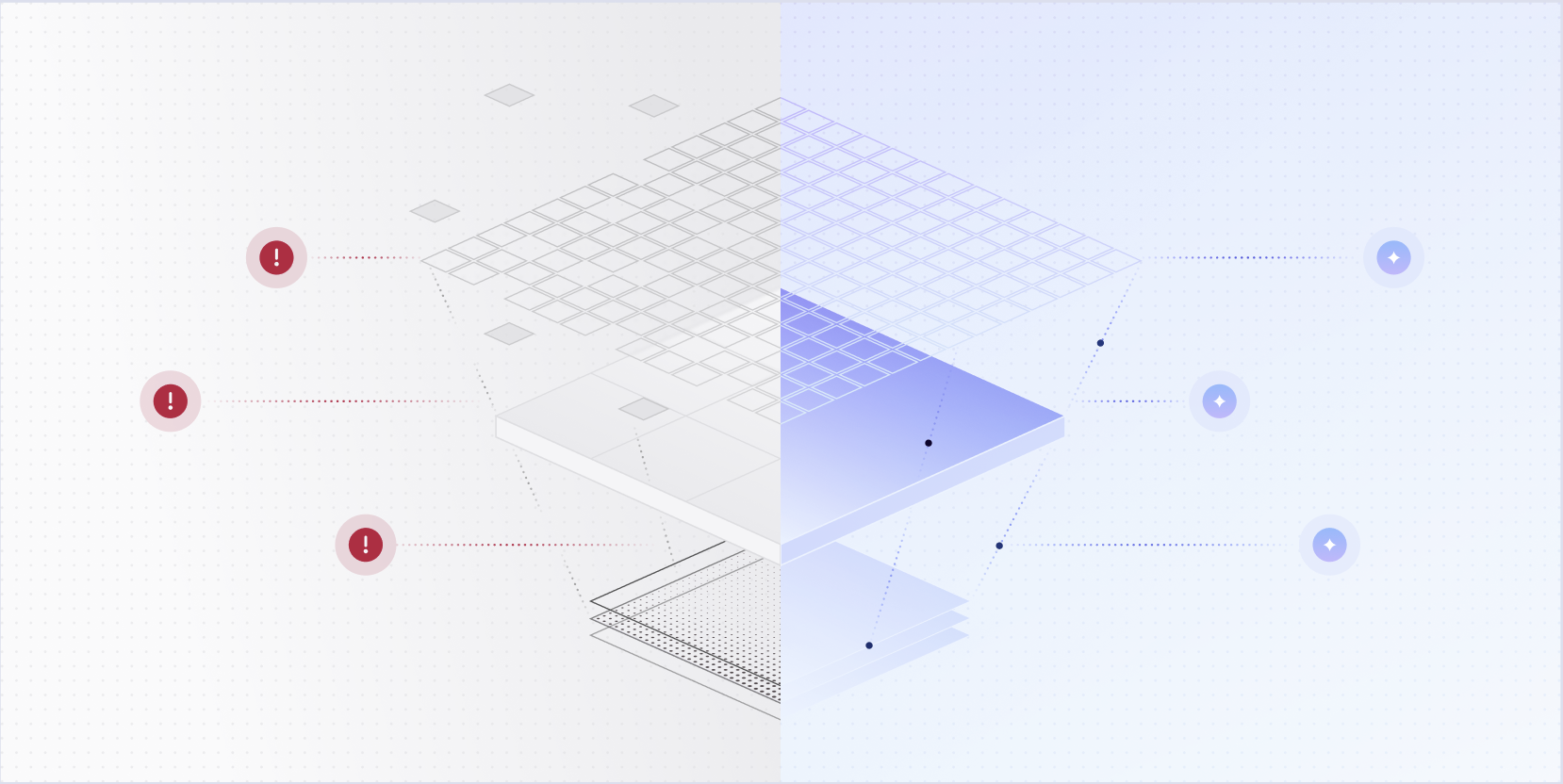

So we stopped hiring engineers for coding ability. If the thing you're screening for can be measured cleanly, some agent is eventually going to one-shot it — which means an interview built around cleanly measurable skills is, almost by definition, screening for nothing worth screening for.

The traits with the most leverage run the other way. Whether someone builds for a world where the models keep improving. Whether they treat a good-looking model output with the skepticism it deserves. Whether they go deep into the domain when the domain is messy and specific and nobody's written it down. These are the things that resist measurement, which is more or less exactly why they're the ones still worth hiring for — and exactly what working with models well actually requires. We rebuilt the interview around them. Here's what we hire for instead.

Build for the next model

The first thing we look for is whether a candidate builds in a way that gets better as the models get better. The model layer is the fastest-moving part of the stack. If your product doesn't absorb those gains automatically, you're signing up for a lot of rewrites.

Like every vertical AI company, we constantly face problems that involve pulling structured data out of unstructured sources. A good example: we had to ingest large data dumps for our lease audit product — leases, ledgers, resident records, a lot of it handwritten, none of it in any consistent format.

A reasonable first instinct is to write a classifier for each file type and hard-code the domain knowledge for each one — this ledger uses this date format, this lease template puts the rent amount in this section. The trouble is that you overfit to whoever's data you built the pipeline around. The next customer's files look slightly different and the whole thing starts breaking. You're also betting on your ability to enumerate the world faster than the models can learn it, which is not a bet you want to make.

We took a different approach. We built a pipeline where an agent does the extraction work inside a sandbox, with access to a file system and tools like grep, and careful prompting around what to do and how to verify. It ended up writing parsing scripts for the Excel sheets it ran into — sort of like what we would have done, except tailored to the specific sheets in front of it, rather than one script with a forest of branches for every spreadsheet format we'd ever seen.
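To make "a sandbox with a file system and tools like grep" concrete, here is a minimal sketch of the tool side of such a setup. It is not our pipeline: the `Sandbox` class and its `list_files` and `grep` methods are hypothetical names invented for illustration, and the model call itself is left out entirely. The only idea it shows is that every tool the agent can call is confined to one directory.

```python
import re
from pathlib import Path


class Sandbox:
    """Hypothetical file tools for an agent, all confined to one root directory."""

    def __init__(self, root: str) -> None:
        self.root = Path(root).resolve()

    def _resolve(self, relative: str) -> Path:
        # Resolve symlinks and ".." first, then refuse anything outside the root.
        path = (self.root / relative).resolve()
        if not path.is_relative_to(self.root):  # requires Python 3.9+
            raise PermissionError(f"{relative!r} is outside the sandbox")
        return path

    def list_files(self) -> list[str]:
        """Return every file under the root, as sorted relative paths."""
        return sorted(
            str(p.relative_to(self.root)) for p in self.root.rglob("*") if p.is_file()
        )

    def grep(self, pattern: str, relative: str) -> list[tuple[int, str]]:
        """Return (line_number, line) for each line matching the regex."""
        regex = re.compile(pattern)
        text = self._resolve(relative).read_text(encoding="utf-8", errors="replace")
        return [
            (number, line)
            for number, line in enumerate(text.splitlines(), start=1)
            if regex.search(line)
        ]
```

Nothing in these tools knows what a ledger or a lease looks like. In a setup like this, the format-specific logic lives in whatever the agent writes after looking at the files, which is the part that improves when the model does.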

The hand-engineered pipeline would have stayed roughly as good as the day we shipped it. The agent version gets better every time the models do, without us touching it.

Evals, Evals, Evals

The second thing we look for is skepticism toward good-looking model output. The failure mode is familiar: a model produces a good-looking result, someone takes that as evidence that the problem is basically solved, and the rest of the system gets built on top of it. That would be fine, except that the good-looking result and the reliable system are usually separated by a lot of work, and most of that work is where the problem actually lives.

Take the lease audit agent from earlier. The first time we ran it end-to-end on a real customer dump, it produced a confident, well-formatted output. It looked great. If we'd shipped based on that one run, we'd have shipped something broken. When we ran it across a proper eval set, the failures showed up. At the top of the pipeline, the OCR had holes — it wasn't catching everything. At the bottom of the pipeline, the reasoning broke on the domain-specific stuff. We spent the next few weeks fixing those, and it works now — on data we've never seen, not just the dump we built it on. But the point is that one good demo told us almost nothing. The evals told us what the system actually did.
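For a sense of what "a proper eval set" means in practice, here is a minimal sketch of a harness. It is an illustration under assumptions, not the one we run: `EvalCase`, `run_evals`, the category names, and the `toy_extract` stand-in are all hypothetical. The shape is the point: labeled cases, a pass rate per category, and a list of every field that came back wrong.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EvalCase:
    """One labeled example; `category` is a hypothetical tag for slicing results."""
    case_id: str
    category: str
    document: str
    expected: dict


def run_evals(extract: Callable[[str], dict], cases: list[EvalCase]) -> dict:
    """Score `extract` per category and collect every field-level mismatch."""
    totals: dict = defaultdict(int)
    passed: dict = defaultdict(int)
    failures = []
    for case in cases:
        totals[case.category] += 1
        try:
            actual = extract(case.document)
        except Exception as exc:  # a crash counts as a failure, not a skipped case
            failures.append((case.case_id, "<exception>", None, repr(exc)))
            continue
        wrong = [
            (case.case_id, name, want, actual.get(name))
            for name, want in case.expected.items()
            if actual.get(name) != want
        ]
        if wrong:
            failures.extend(wrong)
        else:
            passed[case.category] += 1
    pass_rate = {category: passed[category] / totals[category] for category in totals}
    return {"pass_rate": pass_rate, "failures": failures}


def toy_extract(document: str) -> dict:
    """Stand-in for the real agent: parses 'key=value' pairs separated by ';'."""
    return dict(pair.split("=", 1) for pair in document.split(";") if "=" in pair)


CASES = [
    EvalCase("a", "typed_lease", "rent=1200;tenant=Grandson",
             {"rent": "1200", "tenant": "Grandson"}),
    # Simulates an upstream misread: the document text already contains the error.
    EvalCase("b", "handwritten_ledger", "rent=1200;tenant=Grandison",
             {"rent": "1200", "tenant": "Grandson"}),
]

if __name__ == "__main__":
    print(run_evals(toy_extract, CASES))
```

Slicing by category is the design choice that matters here. A single overall score would hide the fact that one kind of document fails every time while another never does, and the failure list tells you which field to go look at.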

The people we trust most hold these first two instincts in tension. They build like the models are going to keep getting better, and they also refuse to believe that any particular thing a model did today means what it looks like it means. These pull in opposite directions. The ambitious person sees something working and wants to ship. The skeptical person sees something working and wants a thousand more data points. You want neither on their own. The ambitious-only ones ship things that break. The skeptical-only ones never ship at all. You want the person who sees something working, lets themselves be impressed, and then immediately starts asking whether they should be.

Get close to the problem

The third thing we look for is the kind of person who, handed a messy domain they know nothing about, immediately starts trying to figure out how it actually works.

The leverage on domain knowledge just changed. The models will write the code, and they'll be good at it, but they have no way of knowing which details actually matter — those details aren't in their training data, and they have to come from whoever's directing them. The person who's sat with the actual problem ships something that works; the person who hasn't ships something that looks like it works.

Here are some things I've learned on the job:

Obviously you need two people to carry a fridge off a truck. Less obviously, your scheduling system needs to know that. Patching a hole in a wall is three visits, not one, with drying time between each. A lease isn't a fixed roster — residents on the same unit can move in and out at different points. Some pets are named Killer. (This is not actually useful for building, but it's too good not to share.) If a resident's handwriting is bad enough, a model will cheerfully decide their grandson's last name is Grandison.
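A few of those facts, written down as a data model, might look like the sketch below. Every name in it (`JobTemplate`, `Residency`, `Lease`) is hypothetical, and the one-day drying gap is an assumed placeholder, since the real number depends on the repair. The point is only that each fact has to become a field somewhere, or the system doesn't know it.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class JobTemplate:
    """What a scheduler needs to know about a kind of maintenance job."""
    name: str
    technicians_required: int = 1
    visits: int = 1
    min_gap_between_visits: timedelta = timedelta(0)

    def earliest_visit_dates(self, start: date) -> list[date]:
        """Earliest possible date for each visit, respecting the minimum gap."""
        return [start + i * self.min_gap_between_visits for i in range(self.visits)]


@dataclass(frozen=True)
class Residency:
    """One resident's stay on a unit; move_out=None means they still live there."""
    resident: str
    move_in: date
    move_out: Optional[date] = None

    def active_on(self, day: date) -> bool:
        return self.move_in <= day and (self.move_out is None or day < self.move_out)


@dataclass
class Lease:
    """A lease holds stays with their own dates, not a fixed roster of names."""
    unit: str
    residencies: list[Residency] = field(default_factory=list)

    def residents_on(self, day: date) -> list[str]:
        return [r.resident for r in self.residencies if r.active_on(day)]


# Carrying a fridge takes two people.
FRIDGE_DELIVERY = JobTemplate("fridge delivery", technicians_required=2)
# Three visits with drying time between them; the one-day gap is an assumption.
WALL_PATCH = JobTemplate("wall patch", visits=3,
                         min_gap_between_visits=timedelta(days=1))
```

A model would happily write all of this once told. What it can't do is know that `technicians_required` or `min_gap_between_visits` needed to exist in the first place.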

None of this lives in training data. It lives in the heads of property managers and leasing agents and maintenance technicians, and now a lot of folks here at Elise. An engineer who doesn't bother to go learn it will ship something that looks right to everyone except the person who has to use it.

We want people who, dropped into a domain they don't understand, go find out — who read the actual documents, talk to the actual customers, and come back with the distinctions that turn out to matter. Call it intellectual curiosity, call it caring about the problem, call it being unable to leave a weird entry in a resident's ledger unexplained.

The thing without a name

For any engineer keeping up in 2026, working with models well is most of the job. It comes down to three things: building in a way that compounds, trusting the right outputs and not the wrong ones, and bothering to understand the domain before you start directing. People keep calling it taste. I'm not sure that's the right word — maybe judgment, maybe wisdom, maybe there isn't a word for it. Which is the point.

Whatever you call it, that's what we're hiring for. And if you're an engineer, it's what you should be trying to build in yourself.